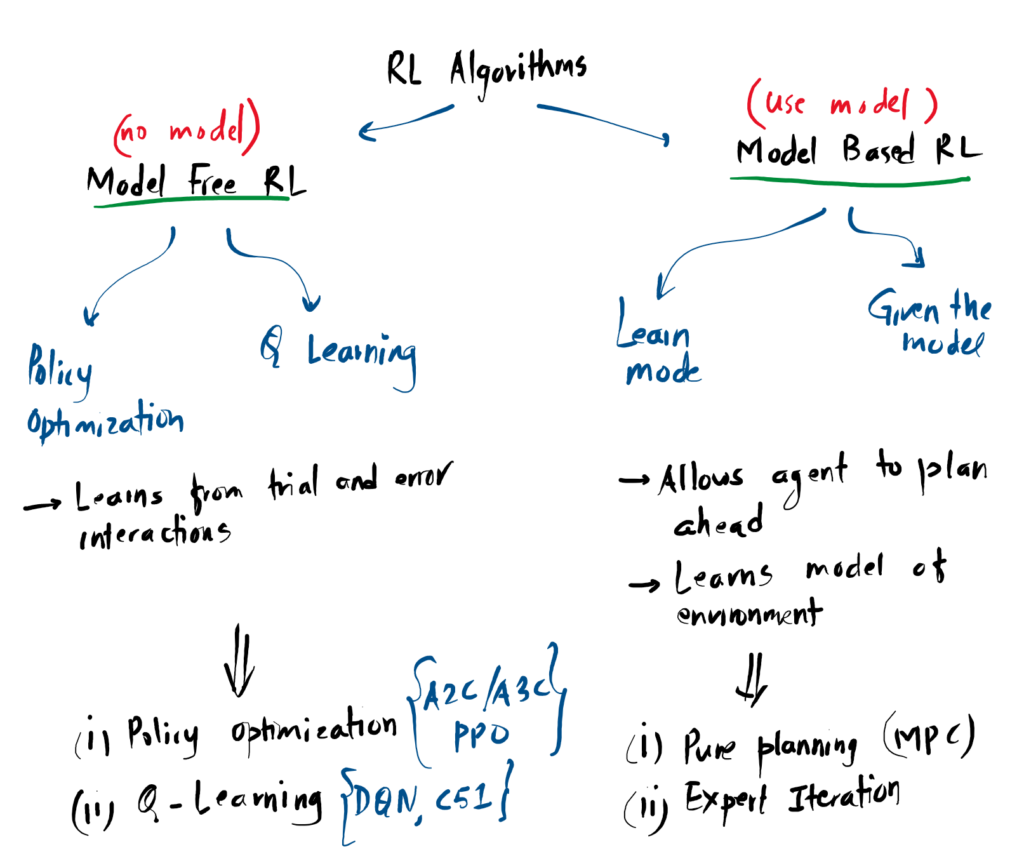

The Two Paths: Model-Free vs. Model-Based

In Reinforcement Learning, the term “Model” refers to the rules of the environment. Specifically, a model consists of the Transition Dynamics (where an action takes you) and the Reward Function (what score that action earns). One of the biggest divides in algorithm design is whether the agent has access to (or learns) these rules, or ignores them entirely.
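To make the two ingredients concrete, here is a minimal sketch of a model for a hypothetical five-state corridor (states 0 to 4, goal at state 4). The environment and the names `transition` and `reward` are illustrative assumptions. Real transition dynamics are usually a probability distribution P(s’|s,a) rather than the deterministic function used here.

```python
# Hypothetical 1-D corridor: states 0..4, moving into state 4 reaches the goal.
N_STATES = 5
GOAL = 4
ACTIONS = (-1, +1)  # move left, move right


def transition(state: int, action: int) -> int:
    """Transition dynamics: where an action takes you (deterministic here).
    Moves are clamped so the agent cannot leave the corridor."""
    return min(max(state + action, 0), N_STATES - 1)


def reward(state: int, action: int) -> float:
    """Reward function: what the action earns (+1 only for reaching the goal)."""
    return 1.0 if transition(state, action) == GOAL else 0.0


print(transition(3, +1), reward(3, +1))  # → 4 1.0
```

Together, these two functions are the whole “model” of this toy world: anything that can answer both questions for every state and action can be used to plan.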

Model-Free RL: Direct Learning

In Model-Free algorithms, the agent does not try to understand how the environment works. It simply plays the game, observes which actions lead to high scores, and learns to repeat those behaviors.

What it actually learns:

- A Policy (π): A direct mapping from a state to an action (“When I see a wall, I jump”).

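- A Value Function (V/Q): An estimate of the total future reward it expects to collect, either from a state (V) or from taking a specific action in a state (Q), e.g., how many points it will get for jumping.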
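As an illustration of learning values without a model, here is a minimal tabular Q-learning sketch on a hypothetical five-state corridor (start at state 0, +1 reward on reaching state 4). The agent only calls `env_step` and observes the outcomes. It never inspects or estimates the rules inside. The environment, function names, and hyperparameters are assumptions chosen for the example.

```python
import random

# Hypothetical corridor: states 0..4, start at 0, episode ends on reaching 4.
N_STATES, GOAL, ACTIONS = 5, 4, (-1, +1)


def env_step(state, action):
    """The environment's hidden rules. A model-free agent only sees the outputs."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


def greedy(q, state, rng):
    """Pick the highest-valued action, breaking ties at random."""
    best = max(q[(state, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(state, a)] == best])


def q_learning(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore with probability epsilon, otherwise exploit.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = greedy(q, state, rng)
            next_state, reward, done = env_step(state, action)
            # Q-learning target: reward plus discounted best next value (0 if terminal).
            if done:
                target = reward
            else:
                target = reward + gamma * max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = next_state
    return q


q = q_learning()
# The policy is read straight off the value table for the non-terminal states.
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)}
print(policy)  # → {0: 1, 1: 1, 2: 1, 3: 1}
```

After training, every non-terminal state maps to +1 (move right), and the Q-value for moving right from state 3 approaches 1.0. Random tie-breaking in `greedy` matters here: with all values starting at zero, always picking the first action would make the agent hug the left wall for a very long time before ever seeing a reward.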

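Trade-off: It typically needs a very large number of trial-and-error interactions (often millions) to learn, but it is generally simpler to implement and tune, and it cannot be fooled by a “broken” understanding of the world because it never builds one.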

Model-Based RL: Planning & Simulation

In Model-Based algorithms, the agent acts like a scientist. It uses its real-world experience to construct an internal simulation (a Model) of the environment. Once the model is built, the agent can “pause” reality and simulate thousands of possible futures to pick the best move.
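The “simulate possible futures” idea can be sketched as a brute-force planner. Given a model of the same hypothetical corridor, it tries every action sequence up to a fixed horizon inside the model and takes the first action of the best one. This exhaustive search is only an illustration (with `horizon=10` it imagines 2^10 = 1024 futures). Practical planners such as Monte Carlo Tree Search or sampling-based methods search far more selectively. All names here are hypothetical.

```python
from itertools import product

# Hypothetical corridor model: states 0..4, goal at 4, actions left/right.
N_STATES, GOAL, ACTIONS = 5, 4, (-1, +1)


def model(state, action):
    """The agent's internal model: predicted next state and reward."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    return next_state, (1.0 if next_state == GOAL else 0.0)


def simulate(state, plan, gamma=0.9):
    """Roll a sequence of actions forward inside the model and
    return its discounted return. Nothing touches the real environment."""
    total = 0.0
    for t, action in enumerate(plan):
        state, r = model(state, action)
        total += (gamma ** t) * r
        if state == GOAL:  # the imagined episode ends here
            break
    return total


def plan_action(state, horizon=10, gamma=0.9):
    """Imagine every action sequence of length `horizon` and return
    the first action of the highest-scoring one."""
    best = max(product(ACTIONS, repeat=horizon),
               key=lambda seq: simulate(state, seq, gamma))
    return best[0]


print(plan_action(0))  # → 1 (move right, toward the goal)
```

The agent only commits to the first action and can replan after every real step, which keeps it responsive if the world turns out differently than imagined.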

What it actually learns:

- Transition Dynamics P(s’|s,a): The probability of landing in state s’ after taking action a in state s, i.e., the physical rules (“If I jump, gravity brings me down”).

- Reward Model R(s,a): The scoring rules, i.e., the expected reward for taking action a in state s (“Grabbing a coin gives +1”).
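In a small tabular setting, learning these two pieces from experience can be as simple as counting. The sketch below assumes hypothetical logged transitions of the form (s, a, r, s’). It estimates P(s’|s,a) as the fraction of times each next state followed (s, a), and R(s,a) as the average observed reward. Larger problems typically replace these tables with learned function approximators such as neural networks.

```python
from collections import defaultdict


def learn_model(transitions):
    """Estimate P(s'|s,a) and R(s,a) from (s, a, r, s_next) tuples by
    counting and averaging (maximum-likelihood estimates for a tabular world)."""
    counts = defaultdict(lambda: defaultdict(int))  # (s, a) -> {s_next: count}
    reward_sum = defaultdict(float)                 # (s, a) -> total reward seen
    visits = defaultdict(int)                       # (s, a) -> number of samples
    for s, a, r, s_next in transitions:
        counts[(s, a)][s_next] += 1
        reward_sum[(s, a)] += r
        visits[(s, a)] += 1
    P = {sa: {s2: c / visits[sa] for s2, c in nxt.items()}
         for sa, nxt in counts.items()}
    R = {sa: reward_sum[sa] / visits[sa] for sa in visits}
    return P, R


# Hypothetical experience from a "slippery" corridor: moving right from
# state 1 worked three times out of four; moving right from 3 hit the goal.
experience = [
    (1, +1, 0.0, 2), (1, +1, 0.0, 2), (1, +1, 0.0, 2), (1, +1, 0.0, 1),
    (3, +1, 1.0, 4),
]
P, R = learn_model(experience)
print(P[(1, +1)])  # → {2: 0.75, 1: 0.25}
print(R[(3, +1)])  # → 1.0
```

The estimates are only as good as the data behind them: a state-action pair seen once gets a very confident but possibly wrong model.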

Trade-off: It can be far more data-efficient because it can practice in its own head. However, if the agent learns a flawed model (e.g., it thinks gravity works sideways), it will plan around those errors, and moves that look great in simulation can fail badly in the real world.
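The failure mode can be reproduced on the same hypothetical corridor. In this sketch, the agent's learned dynamics have the direction of movement backwards (its version of “gravity works sideways”). As a result, the plan that looks best inside the model earns nothing in the real environment. The functions and the specific flaw are illustrative assumptions.

```python
from itertools import product

# Hypothetical corridor: states 0..4, goal at 4, actions left/right.
N_STATES, GOAL, ACTIONS = 5, 4, (-1, +1)


def real_env(state, action):
    """The true rules of the world."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    return next_state, (1.0 if next_state == GOAL else 0.0)


def flawed_model(state, action):
    """A learned model with the direction backwards: it predicts that
    'left' (-1) moves right and 'right' (+1) moves left."""
    return real_env(state, -action)


def rollout(step_fn, state, plan, gamma=0.9):
    """Discounted return of a fixed action sequence under `step_fn`."""
    total = 0.0
    for t, action in enumerate(plan):
        state, r = step_fn(state, action)
        total += (gamma ** t) * r
        if state == GOAL:
            break
    return total


# Plan entirely inside the flawed model...
best_plan = max(product(ACTIONS, repeat=6),
                key=lambda seq: rollout(flawed_model, 0, seq))
imagined = rollout(flawed_model, 0, best_plan)
# ...then execute the same plan in the real environment.
actual = rollout(real_env, 0, best_plan)
print(best_plan[0], round(imagined, 3), actual)  # → -1 0.729 0.0
```

The planner itself works correctly. The bad outcome comes entirely from the model, which is why model-based methods need ways to detect or limit the effect of model errors.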
